A non-governmental organization specializing in monitoring consumer electronics has reported that AI-powered dolls and children’s toys are no longer just entertainment tools. They are now capable of saying inappropriate things to children and collecting extensive data from the home, threatening children’s privacy and safety.

The organization explained that its risk assessment revealed fundamental problems that make these toys unsuitable for children, noting that more than a quarter of the products contain inappropriate content, including content related to psychological harm, drugs, and dangerous behaviors.

It added that these toys require intensive data collection and rely on “subscription models that exploit children’s emotional attachments.”

The organization confirmed that some toys use mechanisms to build friendship-like relationships while simultaneously collecting audio recordings, written text, and behavioral data, which constitutes a violation of privacy in children’s personal spaces.

The organization warned against allowing any child under the age of five near these toys and emphasized the need for caution with children between the ages of 6 and 12.

It was noted that protections for children against AI remain limited compared with the strict testing traditional toys undergo before being sold, prompting calls for strict controls to regulate these devices and protect children.

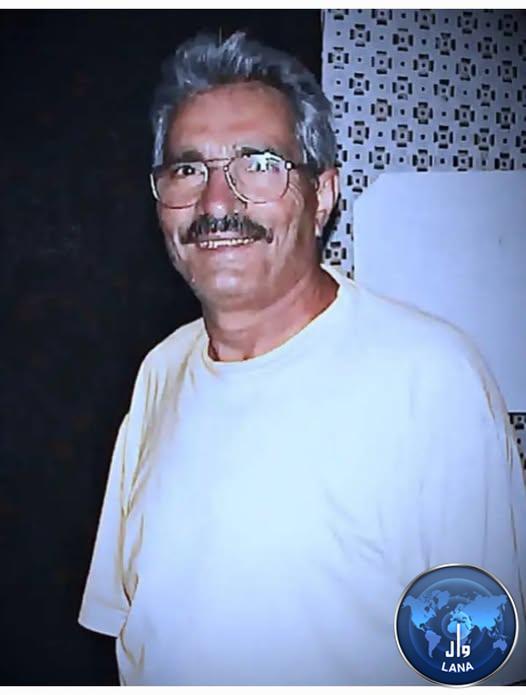

AI toys have grown more popular among children, but warnings have been issued about the psychological, behavioral, and privacy risks they pose, especially as companies rely on AI for advanced interaction and seemingly sentient characters that may influence children.

It has been pointed out that strict legislation and regulatory oversight are necessary before allowing the widespread use of these toys.